Five Google Filtering Penalties, Which Are You Suffering From?

Google has expectations about what natural content and link building look like. When a page goes outside those boundaries, it can be hit with a filtering penalty and stop showing in Google’s SERPs. There are likely dozens of these filters. This article covers five of the most common.

#1 – Boilerplate Links From Irrelevant Sites

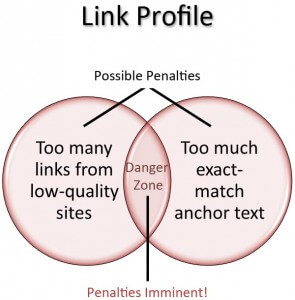

The fastest way to get your site dropped from Google, bar none, is to get a site-wide link with an exact-match keyword anchor from an irrelevant site. This tactic will typically fry your rankings in 3 days or less.

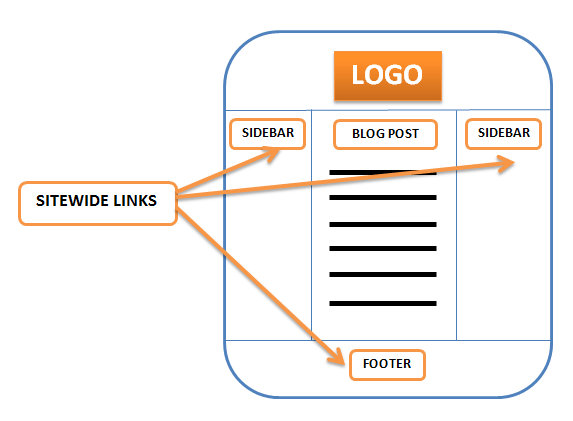

The boilerplate sections of a site are the areas that stay the same on every page: the menu system, sidebars, header and footer. Sometimes these areas contain blocks of text or outgoing links (such as a blogroll). Google has no love for boilerplate sections of a site’s template.

Google has a patent on discovering boilerplate content, and another which completely discounts links from boilerplate sections of templates. A quote from that patent:

“The actions further include identifying a plurality of candidate resources linking to the site; discarding one or more of the candidate resources, each discarded candidate resource linking to the site only from a boilerplate section of the discarded candidate resource;”

In plain English, Google identifies links to a site from boilerplate sections of a template and discards them.
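For auditing your own backlinks, one rough way to spot a link that probably sits in a template is to check whether the same target shows up on every crawled page of the linking site. The sketch below assumes you already have a mapping from page URL to the set of outgoing link targets on that page. The function name and the sample URLs are hypothetical, and this is only a simple heuristic, not the method described in Google’s patent.

```python
def sitewide_links(pages):
    """Return the link targets that appear on every page in `pages`.

    `pages` maps a page URL to the set of outgoing link targets found on it.
    A target present on every page is a likely template (boilerplate) link.
    """
    link_sets = [set(links) for links in pages.values()]
    if not link_sets:
        return set()
    return set.intersection(*link_sets)


# Hypothetical crawl of a three-page linking site.
crawl = {
    "https://linker.example/": {"https://mysite.example/", "https://a.example/"},
    "https://linker.example/about": {"https://mysite.example/"},
    "https://linker.example/post-1": {"https://mysite.example/", "https://b.example/"},
}
print(sorted(sitewide_links(crawl)))
```

Any target this returns is worth a closer look: check its anchor text and whether the linking site is in your niche.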

However, as I mentioned above, Google does more than discard that boilerplate link. The link typically comes with a huge filtering penalty, especially when the site-wide link uses an exact match keyword your page attempts to rank for. The penalty is particularly severe when the boilerplate link comes from a website in an unrelated niche.

I will say this, however: a brand link from a relevant website can be helpful. I’ve received these before from a related website (same niche), where that site also linked site-wide to dozens of other similar sites in its blogroll. The end result was that I ranked #1 for my brand within a few days of receiving that boilerplate link. If you have the opportunity to get a site-wide link, make sure it is for your brand only, with no exact match keywords involved, and that the site is extremely relevant.

#2 – Keyword Stuffing, On-Page Term Use vs Incoming Anchor Texts

This one is a very common problem. If you have a site where some pages are doing very well, while others aren’t even in the top 1000 search results, the problem could very well be keyword stuffing. I’ve done an extensive guide on keyword stuffing for on-page SEO, so I’ll only discuss this topic briefly here.

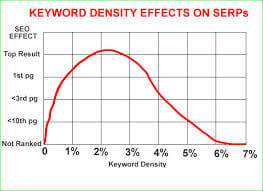

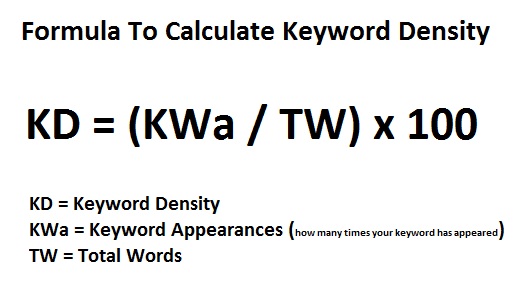

Your on-page keyword use, considered together with the anchor texts of your incoming external links, is used to either give your page a boost in the SERPs or filter it completely, depending on whether your keywords fall in a proper density range or are stuffed.

In general, the anchor texts of your incoming links should have a low percentage of exact match keywords. I suggest no more than 5 exact match links out of 20 and no more than 10 out of 100. From there on, use very few (if any) additional exact match anchors: with 200 links, perhaps 12; with 500, perhaps 20. The more links you get, the less exact match keywords will help (and the more they’ll hurt if they push your page into an over-optimization penalty).
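The suggested limits above can be turned into a quick check on a list of anchor texts. This is a minimal sketch: the four (total links, exact match) pairs come from the paragraph above, while the straight-line interpolation between them, the proportional scaling below 20 links, and the flat cap above 500 are my own assumptions for illustration, as are the function names.

```python
from bisect import bisect_right

# (total_links, suggested_max_exact_match) pairs from the article.
SUGGESTED_CAPS = [(20, 5), (100, 10), (200, 12), (500, 20)]


def max_exact_match(total_links):
    """Suggested ceiling on exact match anchors for a given link count.

    Assumption: values between the article's points are linearly
    interpolated, scaled proportionally below 20 links, and held at 20
    beyond 500 links.
    """
    if total_links <= 0:
        return 0.0
    first_total, first_cap = SUGGESTED_CAPS[0]
    if total_links <= first_total:
        return total_links * first_cap / first_total
    last_total, last_cap = SUGGESTED_CAPS[-1]
    if total_links >= last_total:
        return float(last_cap)
    totals = [total for total, _ in SUGGESTED_CAPS]
    i = bisect_right(totals, total_links)
    (x0, y0), (x1, y1) = SUGGESTED_CAPS[i - 1], SUGGESTED_CAPS[i]
    return y0 + (y1 - y0) * (total_links - x0) / (x1 - x0)


def exact_match_count(anchors, keyword):
    """Count anchors equal to the keyword, ignoring case and outer spaces."""
    target = keyword.strip().lower()
    return sum(1 for anchor in anchors if anchor.strip().lower() == target)


def exceeds_suggested_cap(anchors, keyword):
    """True if exact match anchors exceed the suggested ceiling."""
    return exact_match_count(anchors, keyword) > max_exact_match(len(anchors))


# Hypothetical profile: 20 links, 6 of them exact match.
anchors = ["blue widgets"] * 6 + ["Acme Widgets"] * 14
print(exceeds_suggested_cap(anchors, "blue widgets"))
```

Treat the result as a prompt to review your anchors, not as a verdict. The caps are rules of thumb, not published thresholds.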

Your on-page keyword densities should be in the range of around 0.8% to 1.1% for a 1-word keyword, 0.7% to 1% for a 2-word keyword, and 0.5% to 0.7% for a 3-word keyword. That covers not only the text of your article, but the article combined with whatever keywords the template injects in boilerplate sections of your site (the menu, sidebar, etc.).
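To check a page against these ranges, you can compute the density yourself. The sketch below assumes density means the number of times the keyword phrase occurs divided by the total word count, expressed as a percentage. Other tools define it differently, so treat the formula, the tokenizer, and the function names as assumptions.

```python
import re

# Density ranges (percent) by keyword length in words, from the article.
DENSITY_RANGES = {1: (0.8, 1.1), 2: (0.7, 1.0), 3: (0.5, 0.7)}


def tokenize(text):
    """Lowercase the text and split it into simple word tokens."""
    return re.findall(r"[a-z0-9']+", text.lower())


def keyword_density(text, keyword):
    """Phrase occurrences per 100 words of text (assumed definition)."""
    words = tokenize(text)
    phrase = tokenize(keyword)
    if not words or not phrase:
        return 0.0
    n = len(phrase)
    hits = sum(
        1 for i in range(len(words) - n + 1) if words[i:i + n] == phrase
    )
    return 100.0 * hits / len(words)


def classify_density(text, keyword):
    """Compare the density with the article's range for that keyword length."""
    n = len(tokenize(keyword))
    if n not in DENSITY_RANGES:
        raise ValueError("ranges are only given for 1- to 3-word keywords")
    low, high = DENSITY_RANGES[n]
    density = keyword_density(text, keyword)
    if density < low:
        return "below range"
    if density > high:
        return "above range"
    return "in range"
```

Because template text counts too, pass in the full visible text of the rendered page (article plus menu, sidebar, and footer) rather than the article body alone.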

Finally, keep in mind that your incoming anchor texts and your on-page keyword use go hand-in-hand. If you end up with too many exact-match anchors from external links, you must lower your on-page keyword density to keep the page in balance. If you have very few incoming exact match anchors (or none), you could get away with slightly higher keyword densities on your pages.

If your pages aren’t found in Google at all, check your keyword densities and make sure you’re not stuffing keywords onto the page. This is one of the most common filtering penalties. Fortunately, it’s something that is completely under your control. It is easy to adjust keyword density, and Google is often very quick to lift penalties that were applied to a keyword-stuffed page.

#3 – Heavy Link Rot

There is a term in SEO called “churn and burn”. The concept is that people use automated techniques to send a ton of links to their site — or they purchase a link package where someone does the same. This type of linking sometimes raises your rankings a little, your site plateaus in the search results, and then it completely disappears — not to be found in the top 1000.

While this is partially due to the lower quality of the links, a majority of what makes the site “burn” is the inevitable link rot. One of the worst things that can happen to a page is to receive a link, which later disappears. It’s better to never have a link, rather than have one that goes away. This is known as link rot. It’s a sign that there’s something wrong with your site — because people stop linking to it. When this happens in bulk, Google sees it as a very bad ranking signal.

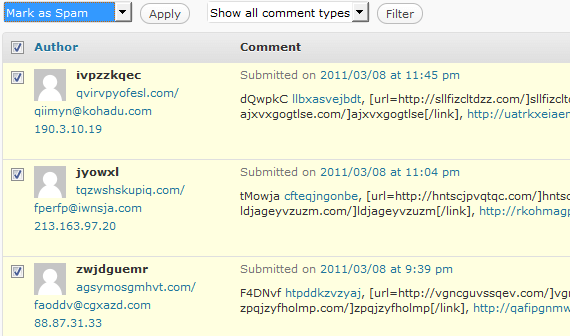

When automated software sticks a link in a comment section, it means that site accepts automated spam comments. As such, it will be inundated with these links. Eventually, hundreds or thousands of comments come rolling in, and as they do, older links get pushed to page 2. Either that, or the site goes offline, because its worth is dubious if it allows automated spam comments. Once you receive a huge influx of low quality links, those links will eventually be gone. When that happens, your rankings will suffer.

My motto is “Never build a link you think will disappear.” I avoid link rot like the plague, because avoiding it gives your site stable rankings. That’s what it’s all about for me: I like to build sites and rankings that will remain consistent, instead of waking up one day to find the rankings have vanished.
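If you export your backlinks at regular intervals, link rot is easy to measure by comparing two snapshots. The sketch below assumes each snapshot is simply a list of linking page URLs. The function name and sample data are hypothetical, and where the snapshots come from (a backlink tool export, your own crawler) is left open.

```python
def link_rot(previous, current):
    """Compare two backlink snapshots.

    Returns the sorted list of links that disappeared and the share of
    the previous snapshot that was lost (0.0 to 1.0).
    """
    prev, cur = set(previous), set(current)
    lost = prev - cur
    rate = len(lost) / len(prev) if prev else 0.0
    return sorted(lost), rate


# Hypothetical snapshots taken a month apart.
last_month = ["https://a.example/post", "https://b.example/page",
              "https://c.example/blog", "https://d.example/links"]
this_month = ["https://a.example/post", "https://c.example/blog"]

lost, rate = link_rot(last_month, this_month)
print(lost)
print(f"{rate:.0%} of last month's links are gone")
```

A high loss rate right after a link campaign suggests those links came from the kind of sources described above.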

#4 – Diversity Filtering

Diversity filtering works like this: when a page of content goes up, Google fetches it and breaks the article down into its elements, deciphering the points it makes. Google then compares these points to other articles on the internet. If the page makes all the same points as other articles and adds nothing new, that page is not “diverse”. As such, Google discards it.

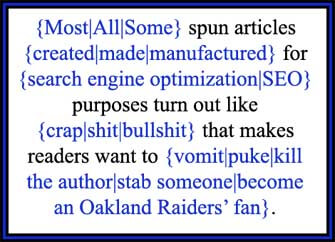

Let me state that I’m not just talking about duplicate content. I’m talking about re-written articles, that say the same thing (even though worded differently). This also includes the low quality article spins people make. Google can tell the difference if someone uses synonyms to construct an article that essentially says the same thing as another article on the internet (only worded differently).

If re-worded or lower quality articles (that make the same point as other pages on the internet) are found on your money site, it will be very hard to rank those pages. If Google finds dozens or hundreds of these lower quality articles, that are not diverse, on your blog network — it will discard the links to your site, and the benefit those links gave to your rankings.

Someone could have a 500-strong private blog network, and yet if those 500 sites all receive a lower-quality spun article, or lower quality re-written articles, that make the same points as other pages on the internet — that entire network is worthless. Those articles pass no value. It is far better to have 1 blog with diverse articles, than it is to have 500 that are all clones of each other. Unless the content is diverse, making different points than other articles on the internet, a blog will not pass benefit to your money site.

#5 – Fishy Factors

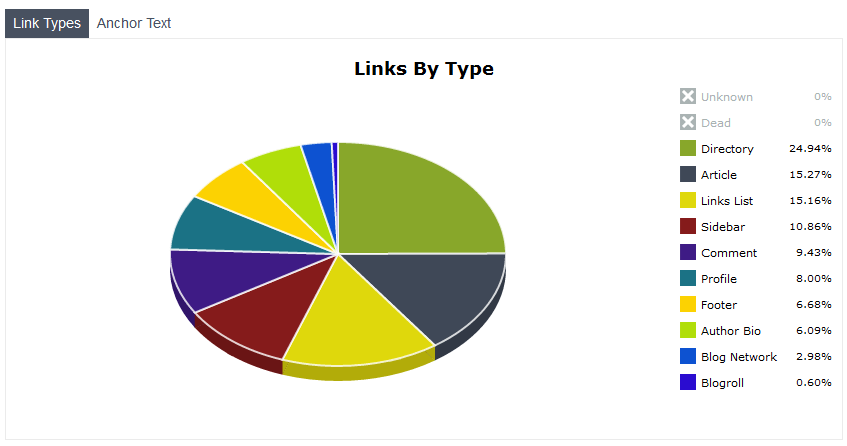

I’m categorizing many other things into this “fishy factors” section. Are all your incoming links do-follow, with none that are nofollow? That’s fishy. Do you have 1000s of brand links to your site, yet nobody even searches Google for your brand? That’s fishy. Do you have 1000s of links from certain platforms (say, free blog web2.0s), but no links from social networks? That’s fishy.

Keep in mind that Google has a team of college educated search engineers whose job is to stop spammy sites. Can Google determine something is fishy about your site when you have 1000 exact match links for a keyword that doesn’t even have 500 searches each month? Yes! You must go for diversity when building your site.

Get links from many sources: free blogs, guest blog posts, outreach to leaders in your niche for quality relevant links, and social links from the top social platforms such as Facebook, Twitter, Google+ and LinkedIn. These all add up to a natural link portfolio. You want to stay within the bounds of what is normal. Sometimes less is more, especially when it comes to exact match keyword anchors in links.

User metrics are a strong part of avoiding the fishy factor. Google knows approximately how much traffic your site has. If there are thousands of links to your site with your brand anchor, but nobody even searches for your brand, and nobody visits your site except from Google itself — Google is unlikely to consider your link portfolio as being natural.
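The fishy factors in this section can be collected into a simple checklist you run against your own numbers. This is only a sketch: the field names are hypothetical, the figures would come from whatever backlink and keyword tools you use, and the thresholds are my own reading of the examples in this section, not values published by Google.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class LinkProfile:
    dofollow_links: int
    nofollow_links: int
    brand_links: int
    monthly_brand_searches: int
    exact_match_links: int
    monthly_keyword_searches: int
    social_links: int


def fishy_signals(p: LinkProfile) -> List[str]:
    """Return the fishy factors from this section that apply to a profile."""
    signals = []
    total_links = p.dofollow_links + p.nofollow_links
    # Every link is do-follow, with none nofollow.
    if p.dofollow_links > 0 and p.nofollow_links == 0:
        signals.append("no nofollow links")
    # Assumed threshold: 1000+ brand links but nobody searches the brand.
    if p.brand_links >= 1000 and p.monthly_brand_searches == 0:
        signals.append("brand links without brand searches")
    # Assumed rule: more exact match links than monthly keyword searches.
    if p.exact_match_links > p.monthly_keyword_searches:
        signals.append("more exact match links than monthly searches")
    # Links exist, but none come from social networks.
    if total_links > 0 and p.social_links == 0:
        signals.append("no social links")
    return signals


# Hypothetical profile that trips every check.
profile = LinkProfile(
    dofollow_links=3000, nofollow_links=0,
    brand_links=2000, monthly_brand_searches=0,
    exact_match_links=1000, monthly_keyword_searches=400,
    social_links=0,
)
for signal in fishy_signals(profile):
    print(signal)
```

An empty result does not prove a profile looks natural. It only means none of these particular patterns showed up.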

While there are many more filters that can keep your content from ranking in Google, the filtering penalties described above account for the bulk of the problem. By addressing them, you can see your content finally start ranking in the SERPs.