Avoid Looking Like a Spammer – How Google Detects User Generated Content Spam

When it comes to ranking, we all know that links make up the lion’s share of what is required to climb the SERPs. Some link-building activities, especially those driven by automated software, have become less effective in recent years. The most glaring example is comment spam, but links from profiles, social media and other sources are affected as well.

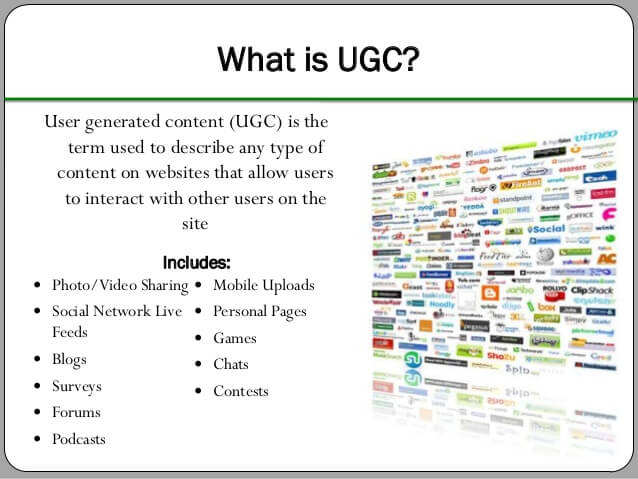

Are all blog comments spam, or just some? Certainly not all social media links are spam, though some are. How does Google distinguish between legitimate and spammy links? In May 2015, Google was issued a patent on how to detect social network spam. The patent also states that the same system “could be applied to any service that collects and posts user generated content”. What constitutes user generated content? Nearly everything! Free blogs count as user generated content too, so this can be applied not only to social networks, but also to the millions of blogs on Blogger, WordPress, Tumblr, etc.

Let’s take a look at the different types of user generated content that links can be created from:

- Comments on Posts

- Free Blog Posts, such as Blogger, WordPress, Tumblr

- Every Social Network, Facebook, Twitter, Google+, LinkedIn

- Photo Directories like Pinterest and Instagram

- Forum Posts

- Articles on Wikis

- Article Directories

- Link Directories

Essentially, this applies to any link that you could generate through your own efforts, which is nearly all of them. It is a good idea, then, to see just how Google detects spam on social networks and in user generated content, so we can avoid that behavior and make our UGC and social links stick better. Following are points from the patent, each followed by my comments (marked “Clarified”).

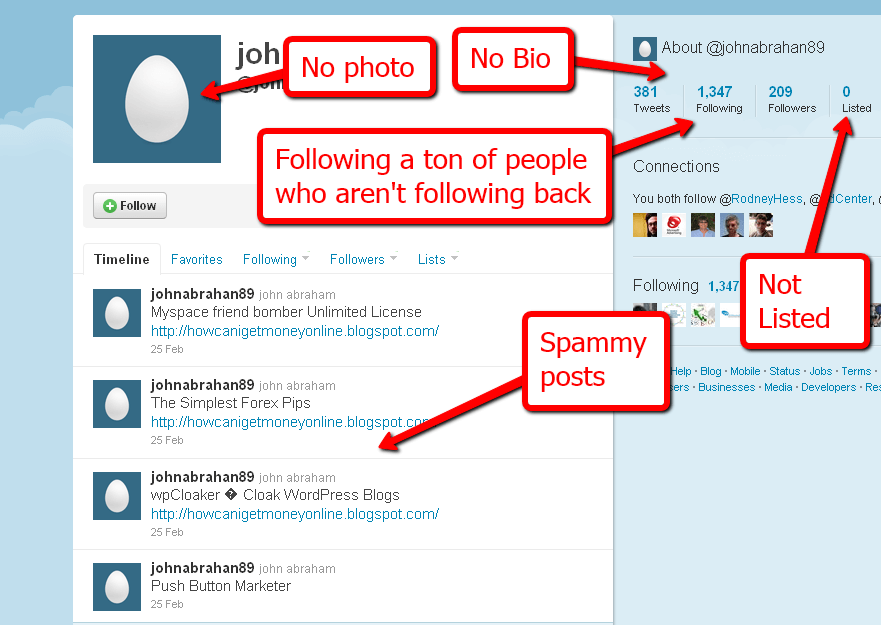

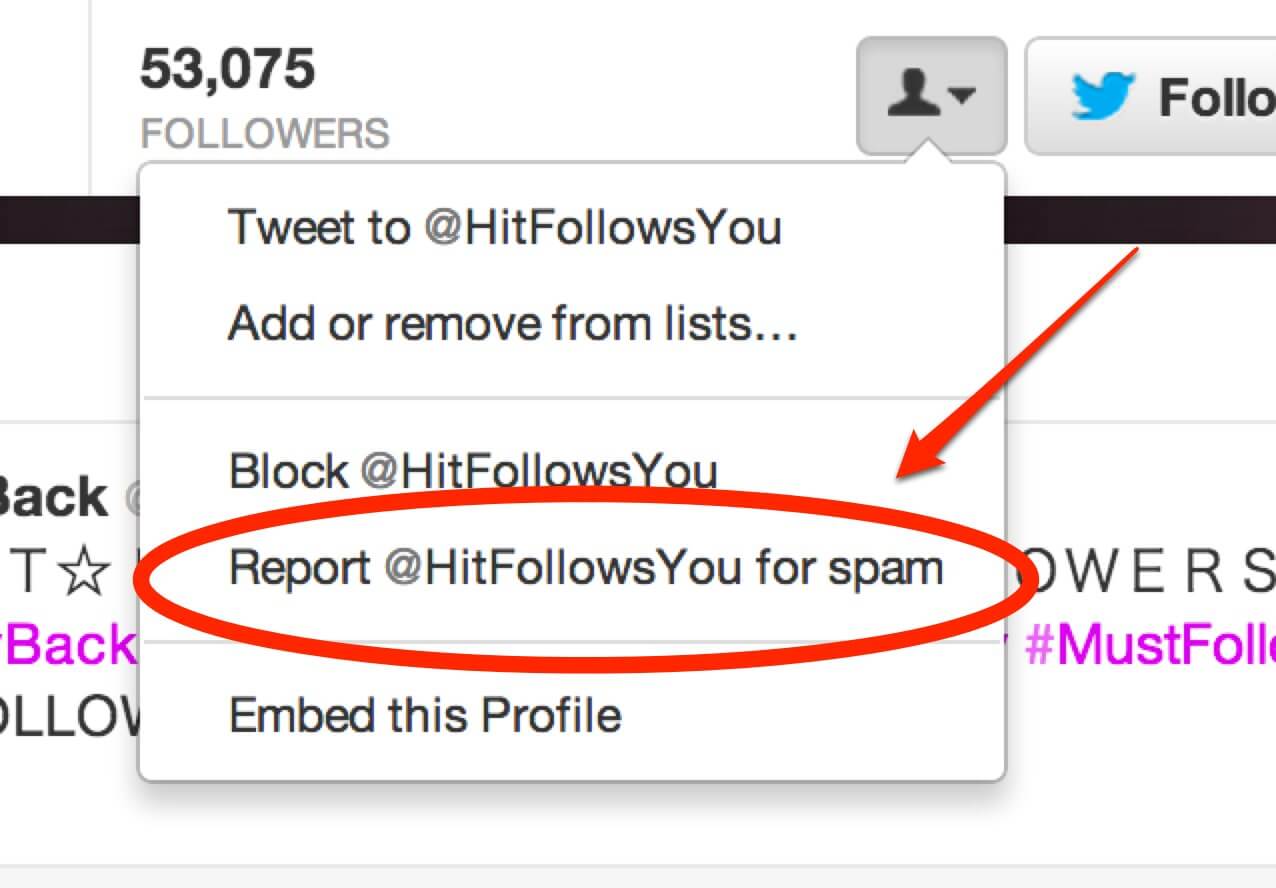

- The method of claim 1, wherein determining whether the post is intentional spam comprises receiving and processing additional signals about the post or a user of a social network that submitted the comment. Clarified: What could these “additional signals” be? Some possibilities: incomplete profile details, whether the profile picture matches those of other accounts, and what the user’s other posts look like. There are many things that could be detected about a user.

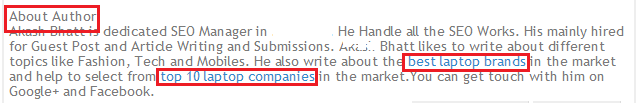

- The method of claim 2, wherein the additional signals include one from the group of a count of identical words weighted by the order that they appear, comparison of word counts across multiple users, links that appear across posts, frequency of links in posts, and ranking or trust score in an online service search index. Clarified: First, the system checks whether a post is similar, not necessarily an exact duplicate. Notice that it checks the “order that words appear”, which would be unnecessary if Google were only checking whether a post was exactly the same. Using the same words (or synonyms) in a different order would be weighted differently, but could still count as a positive for spam if the weight falls within a particular range compared to other posts. Frequency of links is huge. It seems to suggest that having links in all of a user’s posts points to spam, perhaps especially if the links belong to a domain that has received other spam signals. Finally, it talks about a trust score for each user, which could coincide with the author rank that Google would like to attribute to individuals. Perhaps if an individual has a higher trust score, Google would be more inclined to think a spammy-looking link is legitimate.

- The method of claim 1, further comprising: identifying a user of a social network that submitted the post; identifying a second digital fingerprint for a second post previously submitted by the identified user; and wherein determining whether the digital fingerprint of the post is similar to the previously stored digital fingerprints of posts comprises determining whether the digital fingerprint of the post is similar to the second digital fingerprint. Clarified: This point seems to imply that if a user makes two posts in a row and those two posts are similar, the second post is discounted.

- wherein the digital fingerprint is a cryptographic hash of the post, Clarified: Google creates a digital fingerprint of each post. This would make it easier to compare a post against literally billions of other posts from multiple users.

- wherein processing the post comprises one from the group of: disabling an account of a user; silencing other similar messages from a user; initiating automated review of posts by a user; initiating manual review of posts by a user; rejecting the post; providing the comment as a post only to the author; notifying a poster of rejection of the comment; and posting the comment Clarified: It’s clear that this spam detection platform was designed for Google+. However, nothing prevents Google from using it for other user generated content (as they state later in the patent). In that case, instead of directly penalizing a violating user, they can simply discount the links found from that profile.

- wherein determining whether the post is the intentional spam comprises: categorizing the post into the categories of likelihood of being spam based on one from the group of on a number of existing duplicate posts from a poster that submitted the post, a timeframe of existing duplicate posts, a user location of the poster, and a duplication of original posts to which the post and a matching post relate, wherein the digital fingerprint of the post matches a previously stored digital fingerprint of the matching post Clarified: Here are other methods of determining whether a post is spam. They include checking other posts from that user, whether posts were made close together in time, a location footprint (which would be in Google’s control if we’re talking about Google+ or Blogger), and whether multiple posts relate to each other. Note that Google has a “Diversity Filter”, discussed in another recent patent, that provides the ability to discount posts that are not diverse. If a post essentially says the same thing as another post (though using different words), Google can completely discard it as not being different enough.
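
To make the fingerprint and duplicate checks in the claims above a little more concrete, here is a rough Python sketch. It is not Google’s implementation, and the patent does not publish code. SHA-256 as the cryptographic hash, the three-word shingles used to make word order matter, the one-hour window, the threshold of two earlier duplicates, and the labels `ok`, `review` and `likely spam` are all my own assumptions for illustration.

```python
import hashlib
import re
from collections import defaultdict
from datetime import datetime, timedelta


def fingerprint(text: str) -> str:
    """Exact-match fingerprint: SHA-256 of the lowercased words (assumed hash)."""
    normalized = " ".join(re.findall(r"\w+", text.lower()))
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def shingles(text: str, size: int = 3) -> set:
    """Overlapping word sequences, so that word order affects the comparison."""
    words = re.findall(r"\w+", text.lower())
    if len(words) < size:
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def similarity(a: str, b: str) -> float:
    """Jaccard overlap of word shingles, from 0.0 (nothing shared) to 1.0."""
    sa, sb = shingles(a), shingles(b)
    if not sa or not sb:
        return 0.0
    return len(sa & sb) / len(sa | sb)


class DuplicateTracker:
    """Hypothetical tracker: counts recent posts that share a fingerprint."""

    def __init__(self, window: timedelta = timedelta(hours=1)):
        self.window = window  # assumed timeframe for "recent" duplicates
        self.seen = defaultdict(list)  # fingerprint -> [(user, timestamp)]

    def classify(self, user: str, text: str, when: datetime) -> str:
        fp = fingerprint(text)
        recent = [(u, t) for u, t in self.seen[fp] if when - t <= self.window]
        self.seen[fp].append((user, when))
        same_user = sum(1 for u, _ in recent if u == user)
        if same_user >= 2:  # assumed threshold, not taken from the patent
            return "likely spam"
        if recent:
            return "review"
        return "ok"
```

Under these assumptions, posting the same comment three times within a few minutes moves it from `ok` to `review` to `likely spam`, while the same words in a scrambled order produce a different fingerprint and a low shingle similarity. A real system would presumably combine many more signals, such as the trust score and location mentioned in the claims.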

So far, the patent appears to be discussing only Google+. Take note of this section of the patent:

The present disclosure is particularly advantageous in a number of respects. First, the spam detector prevents comments that are spam from being posted in a social network. Second, the spam detector can be used with other signals to improve the accuracy of identifying spam. Third, the spam detector can be used for any user generated content to reduce or eliminate spam.

The way these spam filters are designed, they would be applicable to any user generated content, which covers most of the internet! What is the takeaway from this information, and how can we protect our linking efforts so they are not identified as spam? Following are some thoughts on not being identified as a spammer:

- Automated comment spam. Google’s patent says it can use things such as a digital fingerprint of the words of a post, as well as the links found within a post, to discover spam. Consider how many billions of times the GSA SER default comment spam has been used to generate comments on blogs. Google almost certainly has a fingerprint stored for every single comment from GSA SER’s default comment generator. You shouldn’t use it, and you should be very cautious about buying link packages that would use it.

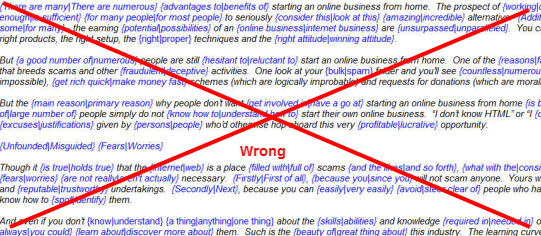

- Private blog network posts. Google has another patent that discusses a diversity filter, which essentially negates badly spun content. Whenever a post is rewritten (but says the same things and adds nothing new), or when a post is spun (swapping words with synonyms, but essentially saying the same thing), those posts are detected and filtered out by Google’s diversity filter. Add that to the current patent, which identifies spam based on outgoing links and all the other factors mentioned in this post, and you can see why posting low quality content to private blog networks doesn’t achieve much for you, aside from penalties. When posting to a private blog network, make sure that you use unique content, preferably with each article written from scratch and covering a different aspect of a topic.

- Posting content to web2.0 free blogs. Free blogs are a popular way to build links, and there is software (such as FCS Networker) that will post articles to a wide variety of free blog platforms. It is important to make the articles you post unique, and not to use badly spun content. Essentially, the content needs to be of high quality, the same as it would for a private blog network.

- Drop your subscriptions to spinning software. Use the money you save to start outsourcing original articles. Don’t get me wrong, my goal isn’t to make you work harder. If it were possible to rank high with push-button software, I would say go for it, and this is coming from someone who has used plenty in the past. However, Google’s diversity filter and detection of spun content mean that if you want long term rankings, your content actually has to be unique, and that includes the content linking to you. The easiest way to do this is to hire out content creation. Having your backlinks classified as spam is not a place you want to go.

- Frequency of links in posts. A natural blog wouldn’t normally have one or two outgoing links on every single article. In addition, you can be identified by where you’re linking to. A proper blog that you’re using to rank your money site should have outgoing links to many authority sites, in addition to links to your money site. You shouldn’t link to your money site more than once per blog, and not every post should have an outgoing link. The more you link to your money site, the more your blog looks like an attempt to manipulate Google’s SERPs, instead of an unrelated author deciding to link to your site based on the merit of your content.
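
If you want to check your own blog against the link frequency point above, a small script can report how many posts carry outgoing links and how many point at your money site. This is only a sketch using Python’s standard library. The function name `link_profile`, the example hostnames, and the idea of treating each post as a string of HTML are my assumptions, and the output says nothing about what thresholds Google actually uses.

```python
from html.parser import HTMLParser
from urllib.parse import urlparse


class LinkCollector(HTMLParser):
    """Collects the hostnames of all absolute links in one post's HTML."""

    def __init__(self):
        super().__init__()
        self.hosts = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        href = dict(attrs).get("href") or ""
        host = urlparse(href).netloc.lower()
        if host:  # relative links have no host and are skipped
            self.hosts.append(host)


def link_profile(posts, own_host, money_host):
    """Summarize outgoing links across a list of post HTML strings."""
    with_links = 0
    to_money = 0
    for html in posts:
        collector = LinkCollector()
        collector.feed(html)
        # Links back to the blog itself are internal, not outgoing.
        external = [h for h in collector.hosts if h != own_host]
        if external:
            with_links += 1
        if money_host in external:
            to_money += 1
    total = len(posts) or 1  # avoid dividing by zero on an empty blog
    return {
        "posts": len(posts),
        "share_with_outgoing_links": with_links / total,
        "share_linking_to_money_site": to_money / total,
    }


if __name__ == "__main__":
    # Hypothetical posts and hostnames, for illustration only.
    sample_posts = [
        "<p>No links here.</p>",
        '<a href="https://en.wikipedia.org/wiki/Spam">reference</a>',
        '<a href="https://money.example/page">offer</a> <a href="/about">about</a>',
        '<a href="https://myblog.example/other">internal</a>',
    ]
    print(link_profile(sample_posts, "myblog.example", "money.example"))
```

If the share of posts linking to your money site comes out close to 1.0, the blog has the kind of footprint this section warns about.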

I know what many of you are thinking. You’ve built so many links using spam techniques. You’re used to thinking in terms of having tens of thousands of links per day. The thought of hiring out to have great articles created, so that you can post them for one backlink here, and one backlink there, seems like a complete waste of time. It’s not a waste of time. When you cover all your bases (including proper on-page SEO, building author rank, getting the highest quality guest blog posts, and using other whitehat linking techniques), you don’t need as many links to rank, because Google actually will start giving your links credit.

Some people think they rank well with spam, but much of this is because Google’s content filters haven’t caught up to them yet. When you go the lower quality route, Google doesn’t apply all of its filters to your content until it has fully analyzed your links. Eventually, it will determine that the content is spam, and your rankings will fall. If you want long term rankings, instead of “churn and burn” temporary rankings, you must follow a path of producing higher quality content for your backlinks.
